Moore's Law refers to a prediction made in 1965 by Gordon E. Moore, who later co-founded Intel, that the number of transistors that can be packed into a computer processor of a given size should be expected to double at a regular interval, while the cost of that computing power is halved. Moore's 1965 projection was a doubling every year; in 1975 he revised it to a doubling every two years, which is the form usually quoted today. When Moore made the prediction, he had not set out to create a law or state a truism; nonetheless, the industry tracked his projection closely for decades. The often-cited 18-month doubling figure is usually attributed to Intel executive David House and refers to chip performance rather than transistor count.

The prediction has proven remarkably accurate over time, fueling rapid advancements in digital technology, from the personal computer revolution to the development of supercomputers and vast data centers. Moore's Law has been a guiding force in the semiconductor industry for planning research and development goals. In turn, it has led to the prevalence of affordable yet microscopic transistors that have shaped all facets of society. From consumer smartphones to weather forecasting to life-saving hospital equipment, nearly every economic sector has seen improvements in productivity and efficiency due to the shrinking size of transistors.

The cornerstone of Moore's Law is transistor scaling. Transistors, the basic building blocks of electronic devices, function as switches that control the flow of electrical current in a device. When Moore first announced his law, the process technology node (nominally, the size of the smallest transistor feature) was approximately 50 micrometers. Today, leading-edge nodes are described as just a few nanometers, meaning far more transistors can fit on a chip, thus increasing its computational power. This trend of miniaturization, commonly referred to as scaling, allows for the continuous improvement of digital electronic devices. It is a complex process that requires ongoing technological innovation, as every reduction in size presents new challenges for materials, design, fabrication, and quality control.

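
The doubling rule and the scaling relationship described above can be made concrete with a little arithmetic. The following is a minimal sketch, not an industry model: the function names, the starting count of 1,000 transistors, and the feature sizes are all hypothetical, and the density calculation assumes that transistor area shrinks with the square of the feature size, which real process nodes only approximate.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_period_years: float = 2.0) -> float:
    """Transistor count after `years`, assuming a fixed doubling period."""
    return initial_count * 2 ** (years / doubling_period_years)


def density_gain(old_feature_size: float, new_feature_size: float) -> float:
    """Idealized density gain when the linear feature size shrinks.

    Assumption: transistor area scales with the square of the feature
    size, so halving the feature size quadruples the density.
    """
    return (old_feature_size / new_feature_size) ** 2


if __name__ == "__main__":
    # Hypothetical starting point: 1,000 transistors, projected 10 years out.
    print(projected_transistors(1_000, years=10))  # two-year doubling
    print(projected_transistors(1_000, years=10, doubling_period_years=1.5))
    # Same units for both sizes (for example, micrometers).
    print(density_gain(10.0, 5.0))
```

With these placeholder inputs, ten years of two-year doublings gives a 32-fold increase, while an 18-month period gives roughly a hundredfold increase, which shows how sensitive long-range projections are to the assumed doubling period.
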
The second core component of Moore's Law is the increasing density of integrated circuits. As the size of transistors decreases, more of them can be integrated onto a single chip. This increased integration density leads to higher performance and more functionality, enabling more complex and powerful computing devices. It also reduces the cost per transistor, as more transistors can be produced on the same area of silicon wafer. The result has been a dramatic decrease in the cost of computing power over time, making digital technology increasingly accessible and widespread.

The third component of Moore's Law is the improvement in processing speed that comes with increasing transistor density. As more transistors are packed into a smaller area, electrical signals have a shorter distance to travel. This means that the microchip can execute instructions more quickly, leading to faster data processing and higher performance. However, while Moore's Law predicts a doubling of transistor density, that does not necessarily equate to a doubling of processing speed. Other factors, such as software efficiency and architectural design, also play a role in determining a chip's overall performance.

As Moore's Law suggests, the continual increase in transistor density leads to a regular decrease in the cost per transistor. Consequently, the performance-to-price ratio of computing has improved dramatically over time. The cost of a given amount of computing power halves approximately every two years, making technology increasingly affordable for consumers and businesses. This trend has led to the proliferation of digital technology in all sectors of the economy, from manufacturing and healthcare to education and entertainment. It has also democratized access to technology, enabling even individuals and small businesses to take advantage of powerful computing capabilities.

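
The cost relationship described above can be sketched in the same way. This is an illustrative calculation only: the function names and dollar figures are hypothetical, the two-year halving period is the rule of thumb from the text, and the per-transistor calculation assumes that the cost of processing a given area of silicon stays constant, which in practice it does not.

```python
def cost_after(initial_cost: float, years: float,
               halving_period_years: float = 2.0) -> float:
    """Cost of a fixed amount of computing power after `years`."""
    return initial_cost * 0.5 ** (years / halving_period_years)


def cost_per_transistor(cost_per_mm2: float,
                        transistors_per_mm2: float) -> float:
    """Cost per transistor, assuming a fixed cost per unit of wafer area."""
    return cost_per_mm2 / transistors_per_mm2


if __name__ == "__main__":
    # Hypothetical: computing power that costs 1,000 today, six years later.
    print(cost_after(1_000.0, years=6))
    # Doubling density at a constant cost per area halves the unit cost.
    print(cost_per_transistor(10.0, 1_000.0))
    print(cost_per_transistor(10.0, 2_000.0))
```

Six years at a two-year halving period is three halvings, so the hypothetical 1,000 falls to 125. The second function shows why density matters for price: if the cost per unit area is held fixed, every doubling of density cuts the cost per transistor in half.
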
The semiconductor industry, at the heart of which lies the production of microchips, has grown exponentially alongside the continuous march of Moore's Law. That progress has driven technological advancements and made possible the development of new products and industries, such as personal computers, smartphones, and cloud computing. The demand for more powerful, efficient, and smaller microchips has pushed the industry to innovate continuously. This has led to the creation of a wide range of specialized chips, designed for specific tasks like graphics processing, data center operations, and artificial intelligence, further fueling the growth of the industry.

The advancement propelled by Moore's Law has also created countless jobs and contributed significantly to economic growth. The semiconductor industry itself employs millions of people worldwide in a wide range of roles, from research and development to manufacturing and sales. Beyond the semiconductor industry, the economic impact of Moore's Law extends to virtually all sectors of the economy. Affordable computing power drives technology industries such as software, e-commerce, and telecommunications, fostering job growth and boosting economies.

There is a limit to Moore's Law, however. As transistors approach the size of individual atoms, their behavior becomes unreliable because of the way electrons behave at that scale. In a 2005 interview, Moore himself stated that his law "can't continue forever." Many experts agree and expect the physical limits of conventional transistor technology to be reached sometime in the 2020s.

One significant constraint is the physical limit of transistor scaling. As transistors get smaller, they approach the atomic scale, where quantum effects start to interfere with their operation. Moreover, as transistors shrink, it becomes increasingly difficult to maintain their performance.
Issues such as current leakage and rising power density become more pronounced, potentially hindering further miniaturization and performance improvements.

As more transistors are packed onto a chip, it becomes increasingly difficult to dissipate the heat they generate. Too much heat can damage the chip and degrade its performance, which poses a significant challenge for the design of high-performance computing devices. While various cooling solutions have been developed, from air cooling and liquid cooling to more exotic approaches, these only partially address the issue. Heat dissipation remains a major hurdle to further increases in transistor density and performance.

Lastly, environmental concerns associated with semiconductor manufacturing pose a significant challenge to the continuation of Moore's Law. The production of microchips involves the use of hazardous chemicals and generates a significant amount of waste. Moreover, as chips become more powerful and widespread, they consume more electricity in aggregate, contributing to global energy demand and its associated environmental impacts. While progress has been made in making chips more energy-efficient, the environmental footprint of the semiconductor industry remains a significant concern.

The continual improvement in computing power has enabled the development of increasingly sophisticated and powerful digital technology, from personal computers and smartphones to artificial intelligence and quantum computing. These advancements have transformed the way we work, learn, communicate, and entertain ourselves, ushering in the digital age. They have also enabled the development of entirely new industries and revolutionized existing ones, driving economic growth and societal progress.

The continual doubling of transistor density has led to an exponential increase in computing power over time, enabling devices to perform tasks that were previously unthinkable.
For instance, today's smartphones have more computing power than the supercomputers of just a few decades ago. This increased computing power has opened up new possibilities for software development, data analysis, and artificial intelligence, transforming the way we use and interact with technology.

The regular increase in transistor density and the corresponding decrease in cost per transistor have driven a continuous cycle of innovation in the electronics industry. Companies must constantly develop new products and technologies to keep pace with the increasing performance and decreasing cost of microchips. This has led to rapid product cycles, characterized by continual upgrades and the regular introduction of new and improved devices.

However, exponential increases in computational technology might not end with traditional transistors. Quantum computing works on different physical principles and is not subject to many of the limitations of conventional transistors, although it faces engineering challenges of its own. While household quantum computers are still a long way off, research continues to lower the barriers: in April 2020, Intel and its research partner QuTech reported silicon qubits that operate at temperatures above 1 kelvin, a step toward simpler and less costly cooling than earlier systems require.

Moore's Law began as a prediction by Gordon E. Moore in 1965 and became a guiding principle of the semiconductor industry. The relentless increase in transistor density has led to extraordinary advancements in computing power, driving the digital revolution and enabling countless technological innovations. This phenomenon has significantly impacted various sectors of the economy, fostering job creation and economic growth. However, Moore's Law faces challenges, primarily the physical limits of transistor scaling, heat dissipation, and environmental concerns related to semiconductor manufacturing.
Despite these limitations, Moore's Law continues to inspire ongoing research and development, pushing the boundaries of computing technology. Looking ahead, quantum computing emerges as a potential avenue for surpassing the traditional transistor's limitations, offering promising opportunities for even more powerful computational advancements in the future.

Moore's Law Definition

Define Moore's Law in Simple Terms

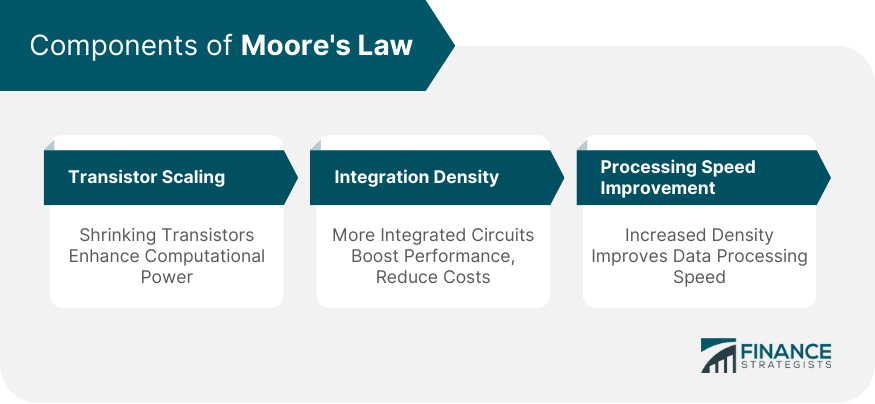

Components of Moore's Law

Transistor Scaling

Integration Density

Processing Speed Improvement

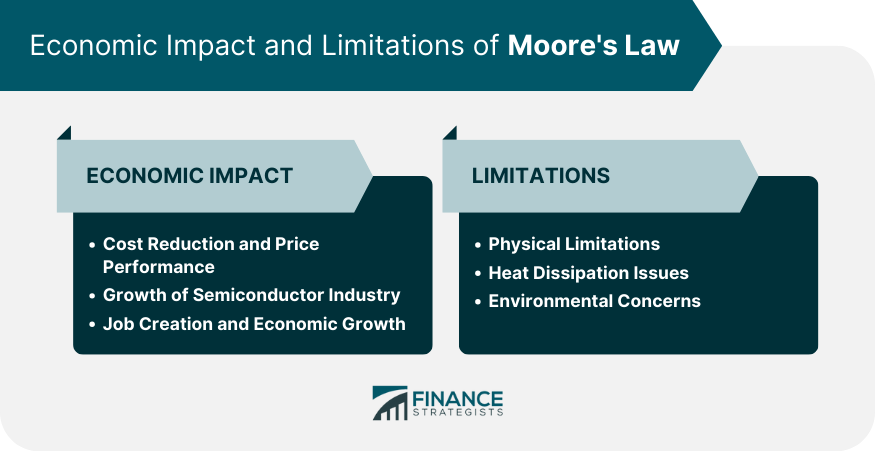

Economic Impact of Moore's Law

Cost Reduction and Price Performance

Growth of Semiconductor Industry

Job Creation and Economic Growth

Limitations of Moore's Law

Physical Limitations

Heat Dissipation Issues

Environmental Concerns

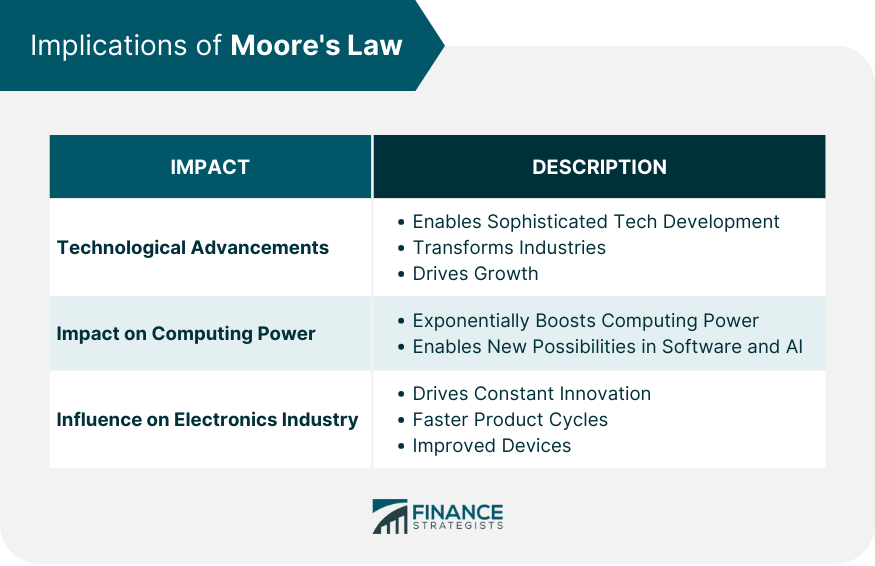

Implications of Moore's Law

Technological Advancements

Impact on Computing Power

Influence on Electronics Industry

Quantum Computing and Moore's Law

Conclusion

True Tamplin is a published author, public speaker, CEO of UpDigital, and founder of Finance Strategists.

True is a Certified Educator in Personal Finance (CEPF®), the author of The Handy Financial Ratios Guide, and a member of the Society for Advancing Business Editing and Writing. He contributes to his financial education site, Finance Strategists, and has spoken to financial communities such as the CFA Institute and to students at his alma mater, Biola University, where he received a bachelor of science in business and data analytics.
